After our poster at EvoStar, GECCO 2019 saw another poster on the evolution of Angry Birds structures. On this occasion it focused on including the Box2D physics simulation engine in the evolutionary algorithm to avoid using Science Birds, which sped up the evaluation of the structures that needed it by 100x.

The poster is minimalistic, with the intention of making it awesome.

Get data, code and the paper itself from our repository.

Exploring concurrent evolutionary algorithms in Perl 6

Last April in Leipzig we presented our paper on concurrent evolutionary algorithms using Perl 6, the new multiparadigm language that includes Channel-based parallelism.

- If your university library allows it, download the paper, which is also available from GitHub in Knitr format, along with experimental data and scripts for processing it.

- The paper was presented as a poster. Check it out.

- A brief presentation was also made.

- All the code is available from the `examples` directory of this repo, obviously under a free license.

Perl 6 proved to be a great testbed for concurrent evolutionary algorithms, using bioinspiration to design the algorithm. There is still a lot to be done in that area, though.

GeNeura work meeting, 22 February 2019

JJ presents the paper «A Neural Network System for Transformation of Regional Cuisine Style» at our weekly meeting.

We are taking part in the II Reunión Internacional de Metabolómica y Cáncer (2nd International Meeting on Metabolomics and Cancer)

Today we gave the talk «Metabolomic data mining applied to oncological processes: state of the art, use cases and tools».

The II Reunión Internacional de Metabolómica y Cáncer is taking place in Granada, specifically at the ABBA hotel.

Stateless evolutionary algorithms

Most algorithms keep some kind of state: a global variable that holds the optimum, a counter of the number of evaluations, some context every piece of the algorithm must be aware of. However, this might not be the best approach when we want to create cloud-native algorithms, and it certainly is not in the case of cloudy evolutionary algorithms. There was a bit of that in GECCO, but since I was attending the Perl Conference in Glasgow, and I was using Perl, I kind of switched focus from the evolutionary part (although there was a bit of that too) to the language-design part and talked about evolutionary algorithms in Perl 6. The presentation is linked from the talk description.

The main problem is that you have to create dataflows that allow the algorithm to progress and also work efficiently in that kind of concurrent architecture, which is similar to the serverless architecture that is our eventual target.
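To make the dataflow idea a bit more concrete, here is a minimal Python sketch (not the Perl 6 code from the talk). It assumes a toy OneMax fitness, a `queue.Queue` standing in for a channel, and hypothetical names such as `evolve_step` and `run`. The point is only that the evolution step is a pure function of the population it receives, so nothing lives in global variables.

```python
import queue
import random


def one_max(bits):
    """Toy fitness (an assumption for illustration): number of ones."""
    return sum(bits)


def evolve_step(population, rng):
    """Pure function: takes a population and returns a new one of the same size."""
    ranked = sorted(population, key=one_max, reverse=True)
    parents = ranked[: len(ranked) // 2]  # the best half survives unchanged
    offspring = []
    for _ in range(len(population) - len(parents)):
        a, b = rng.sample(parents, 2)
        cut = rng.randrange(1, len(a))  # one-point crossover
        child = a[:cut] + b[cut:]
        flip = rng.randrange(len(child))  # single bit-flip mutation
        child = child[:flip] + (1 - child[flip],) + child[flip + 1:]
        offspring.append(child)
    return parents + offspring


def run(channel, steps, seed=0):
    """Worker loop: the population only ever arrives and leaves through the channel."""
    rng = random.Random(seed)
    for _ in range(steps):
        population = channel.get()
        channel.put(evolve_step(population, rng))
    return channel.get()


def demo():
    """Seed the channel with a random population and return the best final fitness."""
    rng = random.Random(42)
    channel = queue.Queue()
    channel.put([tuple(rng.randint(0, 1) for _ in range(16)) for _ in range(20)])
    final = run(channel, steps=30)
    return max(one_max(individual) for individual in final)
```

Because the worker holds nothing but its random generator, several such workers could read from and write to the same channel, which is roughly the shape a serverless function would have too.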

We’ll be continuing this research in the workshop on engineering applications in Medellín, where my keynote will deal with this same topic.

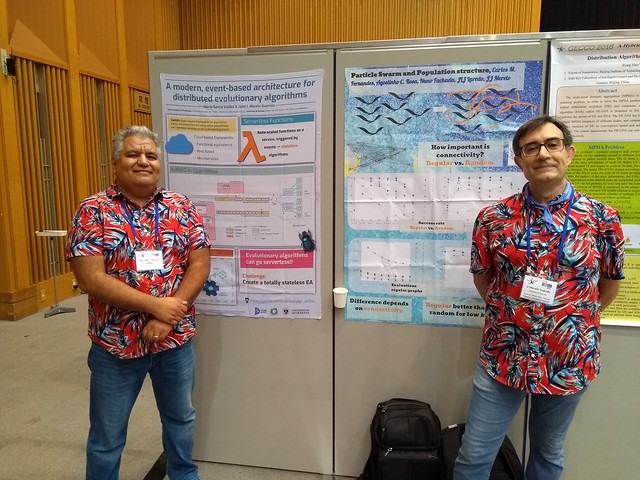

GECCO posters: modern evolutionary algorithms and particle swarm optimization methodologies

Besides the two papers we presented in GECCO workshops, our research group also had a couple of posters in the main track. Posters get a two-page publication that you can find if you want, but probably the posters themselves will be much more informative.

The first one, with Mario García, presented a new (almost) serverless architecture for evolutionary algorithms:

The second one, with Juanlu García and Carlos Fernandes, presents a structured-population approach to avoid premature convergence problems in Particle Swarm Optimization algorithms.

This last work shows that a regular population structure works better when the degree of connectivity is low, and that this degree is quite important and has a big influence on the results.
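As an illustration of what a regular structure with a given degree of connectivity can look like, here is a small Python sketch of a ring lattice. This is an assumption chosen for simplicity, not necessarily the structure used in the poster. The function names are hypothetical, the degree is assumed to be even, and lower fitness is assumed to be better.

```python
def ring_neighbors(index, swarm_size, degree):
    """Indices of the `degree` nearest particles on a ring, plus the particle itself."""
    half = degree // 2
    return [(index + offset) % swarm_size for offset in range(-half, half + 1)]


def neighborhood_best(personal_best_fitness, degree):
    """For each particle, the index of the best (lowest-fitness) particle it can see."""
    n = len(personal_best_fitness)
    return [
        min(ring_neighbors(i, n, degree), key=lambda j: personal_best_fitness[j])
        for i in range(n)
    ]
```

With a low degree, each particle is attracted only by the best of a few neighbours instead of the global best, so good solutions spread through the swarm more slowly.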

As usual, customers received beautiful origami after listening to the explanation. Visit us next time!

Self-organized criticality in code repositories

The GeNeura team is spread all over the world, and Dr. Juanlu Jiménez is in Le Havre as an associate professor. He has been kind enough to invite us for a visit, and here is the presentation we gave there.

Equipe Réseaux d’interactions et Intelligence Collective

During the last two weeks, we have been enjoying the visit of JJ Merelo to the Ri2C team. On May 19th, he delivered a seminar entitled Self-organized criticality in code repositories, of which you can find the abstract and the presentation below.

Abstract

It has been known for some time that work in code repositories tends to self-organize, possibly reaching a self-organized critical state. What was not known are the conditions for this to happen, and what kind of description of the repository is needed to find these properties. In this talk we describe how a self-organized critical state has been found in a wide variety of repositories, whether they hold code or not.

The slides of the presentation are available at: https://jj.github.io/soc-code-repos/#/

Creating Hearthstone decks by using Genetic Algorithms

I’m glad you’re here, friend! There’s a chill outside, so pull up a chair by the hearth of our inn and prepare to learn how the Ancient Gods use the power of the secret and ancient branch of Evolution to generate Hearthstone decks by means of magic and mystery!!

Several months ago, my colleague Alberto Tonda and I were discussing our latest adventures playing the Digital Collectible Card Game Hearthstone, when one of us said «Uhm, Genetic Algorithms usually work well with combinatorial problems, and solutions are usually a vector of elements. Elements such as cards. Such as cards of Hearthstone, the game we are playing right now while we are talking. Are you thinking what I’m thinking?»

Five minutes later we had found an open-source Hearthstone simulator and started to think about how to automatically evolve Hearthstone decks.

The idea is quite simple: Hearthstone is played using a deck of 30 cards (from a pool of thousands available), so it is easy to model the candidate solution. With the simulator, we can perform several matches using different enemy decks, and obtain the number of victories. Therefore, we have a number that can be used to model the performance (fitness) of the deck.
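A rough Python sketch of that modelling follows. It is not the code from the paper: `play_match` is a hypothetical stand-in for a call to the simulator that returns `True` when our deck wins, `matches_per_enemy` is an arbitrary illustrative value, and the random deck ignores the real game's deck-building rules, such as limits on copies of a card.

```python
import random

DECK_SIZE = 30  # from the post: a Hearthstone deck has 30 cards


def random_deck(card_pool, rng):
    """Candidate solution: a list of DECK_SIZE cards drawn from the pool."""
    return [rng.choice(card_pool) for _ in range(DECK_SIZE)]


def fitness(deck, enemy_decks, play_match, matches_per_enemy=4):
    """Number of victories over all the matches played against every enemy deck."""
    return sum(
        1
        for enemy in enemy_decks
        for _ in range(matches_per_enemy)
        if play_match(deck, enemy)  # hypothetical simulator call
    )
```

Since matches in a card game are stochastic, playing several matches per enemy deck gives a less noisy fitness value than a single match would.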

Soooo, it’s easy to see one and one makes two, two and one makes three, and it was destiny, that we created a genetic algorithm that generates decks for Hearthstone for free.

Our preliminary results were discussed here, but we wanted to continue testing our method, so we tested it using all available classes of the game, with the help of JJ, Giovanny and Antonio. All the best human-made decks were outperformed by our approach! And not only that: we applied a new operator called Smart Mutation, which is based on what we do when we test new decks in Hearthstone. We remove a card and put in another one with +/-1 mana crystals, instead of a completely random one from the pool. The results were even better. Neat!
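The Smart Mutation idea can be sketched in a few lines of Python. This is an illustrative guess at the operator, not the implementation from the paper: cards are assumed to be `(name, mana_cost)` tuples, and "+/-1 mana crystals" is read as "a cost within one crystal of the removed card".

```python
import random


def smart_mutation(deck, card_pool, rng):
    """Swap one random card for another whose mana cost is within +/-1 of it."""
    position = rng.randrange(len(deck))
    _, old_cost = deck[position]
    candidates = [
        card for card in card_pool
        if abs(card[1] - old_cost) <= 1 and card != deck[position]
    ]
    mutated = list(deck)  # copy, so the original deck is left untouched
    if candidates:  # keep the deck as it is if no suitable card exists
        mutated[position] = rng.choice(candidates)
    return mutated
```

Compared with picking any card from the pool, this keeps the mana curve of the deck almost intact, so each mutation is a small step in the search space.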

Maybe you prefer to read the abstract, which is written in a more formal way than this post. You know, using the language of science.

Collectible card games have been among the most popular and profitable products of the entertainment industry since the early days of Magic: The Gathering in the nineties. Digital versions have also appeared, with HearthStone: Heroes of WarCraft being one of the most popular. In Hearthstone, every player can play as a hero, from a set of nine, and build his/her deck before the game from a big pool of available cards, including both neutral and hero-specific cards.

This kind of games offers several challenges for researchers in artificial intelligence since they involve hidden information, unpredictable behaviour, and a large and rugged search space. Besides, an important part of player engagement in such games is a periodical input of new cards in the system, which mainly opens the door to new strategies for the players. Playtesting is the method used to check the new card sets for possible design flaws, and it is usually performed manually or via exhaustive search; in the case of Hearthstone, such test plays must take into account the chosen hero, with its specific kind of cards.

In this paper, we present a novel idea to improve and accelerate the playtesting process, systematically exploring the space of possible decks using an Evolutionary Algorithm (EA). This EA creates HearthStone decks which are then played by an AI versus established human-designed decks. Since the space of possible combinations that are play-tested is huge, search through the space of possible decks has been shortened via a new heuristic mutation operator, which is based on the behaviour of human players modifying their decks.

Results show the viability of our method for exploring the space of possible decks and automating the play-testing phase of game design. The resulting decks, that have been examined for balancedness by an expert player, outperform human-made ones when played by the AI; the introduction of the new heuristic operator helps to improve the obtained solutions, and basing the study on the whole set of heroes shows its validity through the whole range of decks.

You can download the complete paper from the Knowledge-Based Systems journal: https://www.sciencedirect.com/science/article/pii/S0950705118301953

See you in future adventures!!!

A better TORCS driving controller presented in EvoStar 2018

Last year, together with Mohammed Salem, from the University of Mascara in Algeria, we presented our TORCS driving controller. This controller drives a simulated vehicle, taking input from its sensors and deciding on a target speed and how to turn the steering wheel.

This year, at EvoStar 2018 in Parma, our paper was again accepted for the poster session, which took place in the incredible corridor to the right of these words. The poster included interactive elements, such as a small car used to demonstrate how the driver worked.

And it works really well, or at least better than the previous versions. The key element was the design of a new fitness function that includes damage as well as terms related to speed. There is still some way to go; in the near future we will be posting our new results in this area.

The book of proceedings can be downloaded from Springer. Our paper is on page 342, and you can also download just the paper from here. We do open science, so you can follow our writing process and download the paper from this GitHub repository too.

Detection and prediction of flows of people and vehicles

As part of the CIMAS 21 conference, which will be held in Granada, I will give a presentation on the possibilities of our WiFi and Bluetooth frame detection system, which we have already discussed several times.

The presentation will focus on the more analytical aspects of the platform, looking at the possibilities it can offer a tourist destination with an emphasis on sports.